Apparently the Pro-Warden of Research and Enterprise at my institution discovered the ‘GPA intensity’ measure for ranking Universities: or at least saw fit this morning to circulate to all staff a message saying

This league table both confirms and affirms Goldsmiths as a research-intensive university within an inclusive research culture – something we can all be proud of.

which is at least sort-of true – as I suggested last night, I think an intensity measure is just about on the side of the angels. One flaw in the REF process is the element of choice in whom to submit, though there are problems in just disallowing that choice, including the coercion of staff onto teaching-only contracts; maybe the right answer is to count all employees of Universities, and not distinguish between research-active and teaching-only (or indeed between academic and administrator): that at least would give some visibility of the actual density (no sniggering at the back, please) of research in an institution.

As should be obvious from yesterday’s post, I don’t think that the league table generated by GPA intensity, or indeed the league table by unweighted GPA, or indeed any other league table has the power to confirm anything in particular. I think it’s generally true that Goldsmiths is an institution where a lot of research goes on, and also that it has a generally open and inclusive culture: certainly I have neither experienced nor heard anything like the nastiness at Queen Mary, let alone the tragedy of Stefan Grimm at Imperial. But I don’t like the thoughtless use of data to support even narratives that I might personally believe, so let’s challenge the idea that GPA intensity rank is a meaningful measure.

What does GPA intensity actually mean? It is the GPA that the institution would have achieved if all eligible staff had been submitted to the REF, rather than just those who actually were, and if all the staff who weren’t originally submitted had received “unclassified” (i.e. 0*) assessments for all their contributions. That’s probably a bit too strong an assumption; it’s easy to see how institutions would prefer not to submit staff with lower-scoring outputs, even if their scoring would be highly unlikely to be 0*, whether they are optimizing for league tables or QR funding. So intensity-corrected GPA probably overestimates the “rank” of institutions whose strategy was biased towards submitting a higher proportion of their staff (and conversely underestimates the “rank” of institutions whose strategy was to submit fewer of their staff).
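To make the arithmetic concrete, here is a minimal sketch of that interpretation in Python. The function names and all the numbers are made up for illustration (they are not taken from the REF data), and I am assuming the usual reading of GPA as the star-weighted average of a quality profile.

```python
def gpa(profile):
    """Star-weighted average of a quality profile.

    `profile` maps a star level (0 to 4) to the percentage of the
    submission assessed at that level.
    """
    total = sum(profile.values())
    return sum(stars * pct for stars, pct in profile.items()) / total


def intensity_corrected_gpa(profile, submitted_fte, eligible_fte):
    """GPA scaled by the proportion of eligible staff actually submitted."""
    return gpa(profile) * submitted_fte / eligible_fte


def gpa_with_unsubmitted_as_zero(profile, submitted_fte, eligible_fte):
    """The same quantity, spelled out: unsubmitted staff all score 0*."""
    unsubmitted_fte = eligible_fte - submitted_fte
    return (gpa(profile) * submitted_fte + 0 * unsubmitted_fte) / eligible_fte


# Hypothetical institution: illustrative figures only.
example_profile = {4: 30, 3: 40, 2: 20, 1: 10, 0: 0}
raw = gpa(example_profile)
corrected = intensity_corrected_gpa(example_profile, 80, 100)
padded = gpa_with_unsubmitted_as_zero(example_profile, 80, 100)
print(raw, corrected, padded)
```

The last two functions agree by construction, which is the point: multiplying by the submitted proportion is the same thing as pretending that everyone left out would have scored nothing at all.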

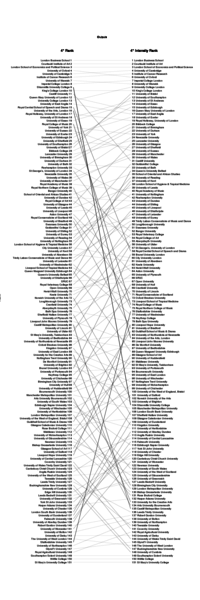

It probably is fair to guess, though, that the unsubmitted staff don’t have many 4* “world leading” outputs (whatever that means), honourable exceptions aside. So the correction for intensity to compare institutions by percentages of outputs assessed as 4* is probably reasonable. Here it is:

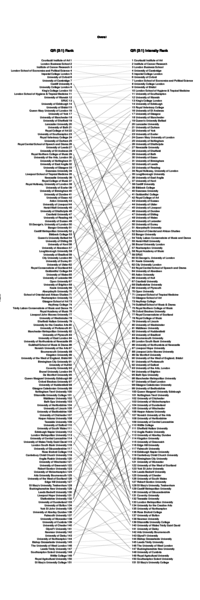

Another case where an intensity correction is probably reasonable is when comparing institutions by some combination of their 4* and 3* scores overall (i.e. including Impact and Environment). This time the reason is that the 4* and (to a lesser extent) 3* scores will probably be among the inputs to QR funding (if that carries on at all), and the intensity correction will scale the funding from a per-submitted-capita to a per-eligible-capita basis: it will measure the average QR funding received per eligible member of staff. As I say, we don’t know about QR funding in the future; using the current weighting (3×4*+1×3*), the picture looks like this:

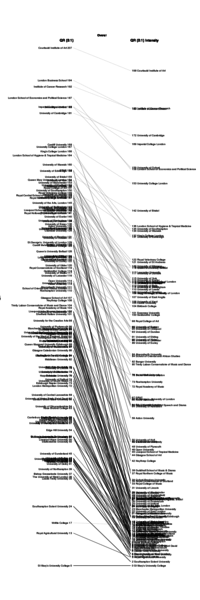
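For the avoidance of doubt about what is being ranked, here is a rough sketch of the QR-related “score” as I have described it: the 3×4*+1×3* weighting applied to the overall profile percentages, then scaled by the proportion of eligible staff submitted. The function names and the example figures are hypothetical, and this is not the actual funding formula, which has other inputs.

```python
def qr_score(pct_4star, pct_3star):
    """Weighted score using the 3x4* + 1x3* weighting on profile percentages."""
    return 3 * pct_4star + 1 * pct_3star


def qr_score_per_eligible(pct_4star, pct_3star, submitted_fte, eligible_fte):
    """Rescale from a per-submitted-capita to a per-eligible-capita basis."""
    return qr_score(pct_4star, pct_3star) * submitted_fte / eligible_fte


# Hypothetical institution: 30% at 4*, 40% at 3*, 80 of 100 eligible FTE submitted.
per_submitted = qr_score(30, 40)
per_eligible = qr_score_per_eligible(30, 40, 80, 100)
print(per_submitted, per_eligible)
```

On this scale a profile that was entirely 4*, with every eligible member of staff submitted, would score 300.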

But wait, does it make sense to rank these at all? Surely what matters here is some kind of absolute scale: small differences between per-capita QR funding at different institutions will be hardly noticeable, and even moderate ones are unlikely to be game-changers. (Of course, institutions may choose not to distribute any QR funding they receive evenly; such institutions could be regarded as having a less inclusive research culture...). So, if instead of plotting QR ranks, we plot absolute values of the QR-related “score”, what does the picture look like?

This picture might be reassuringly familiar to the UK-based research academic: there’s a reasonable clump of the names that one might expect to be characterized as “research-intensive” institutions, between around 89 and 122 on the right-hand (intensity-corrected) scale; some are above that clump (the “winners”: UCL, Cambridge, Kings London) and some a bit below (SOAS). Of course, since the members of that clump are broadly predictable just from reputation, one might ask whether the immense cost of the REF process delivers any particular benefit. (One might ask. It’s not clear that one would ever get an answer).

I should stop playing with slopegraphs and resume work on packaging up the data so that other people can also write about REF2014 instead of enjoying their “holidays”.